Multivariate Experiments

Creating an experiment

Experiments allow you to identify the best version of a marketing campaign to achieve your goals. When creating a flow in Simon, you can create an experiment to test different variations of the campaign.

Configuring an experiment

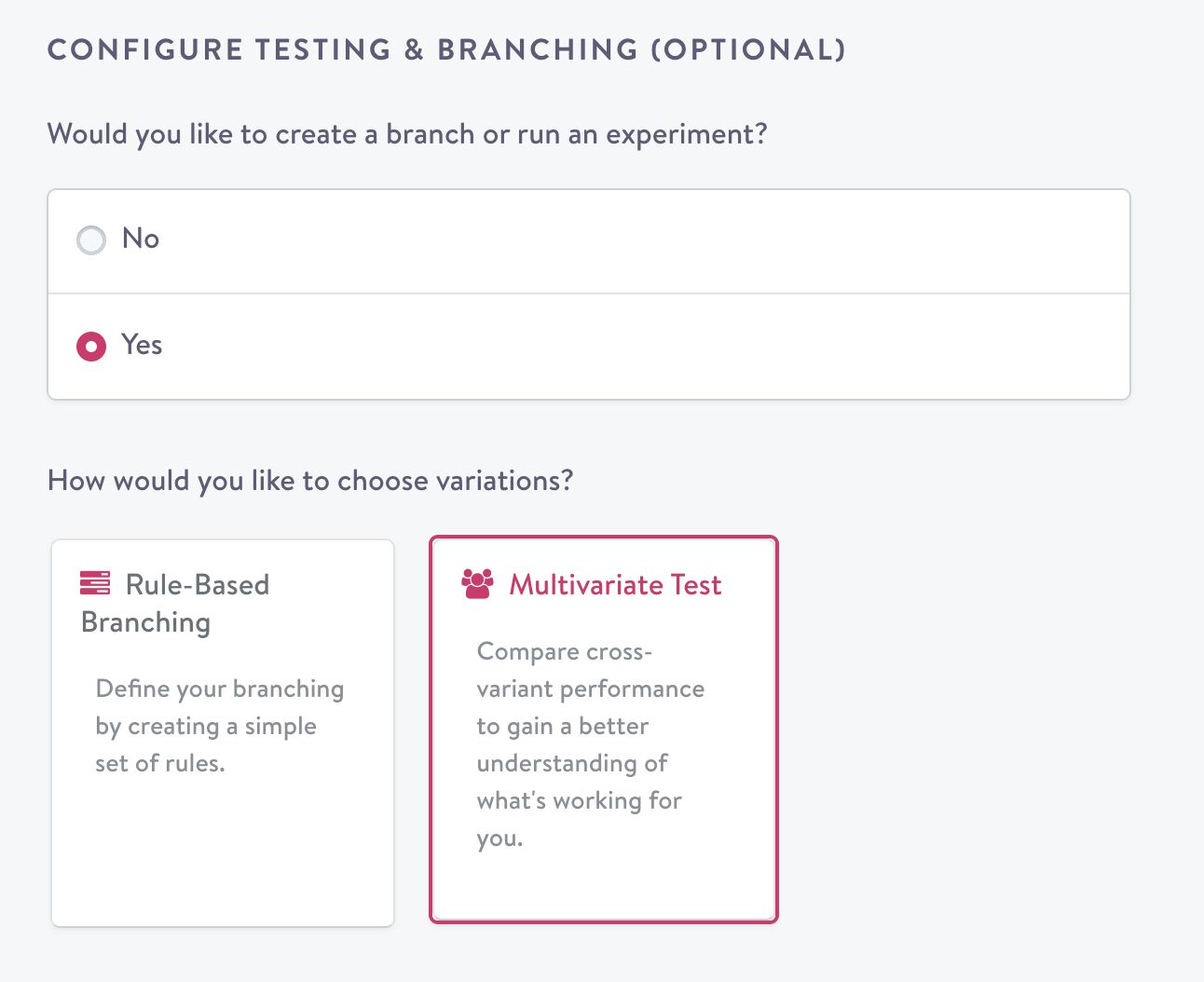

Clicking "Yes" under "Configure Testing (Optional)" opens the experiment configuration:

Selecting experimentation as an option within a flow.

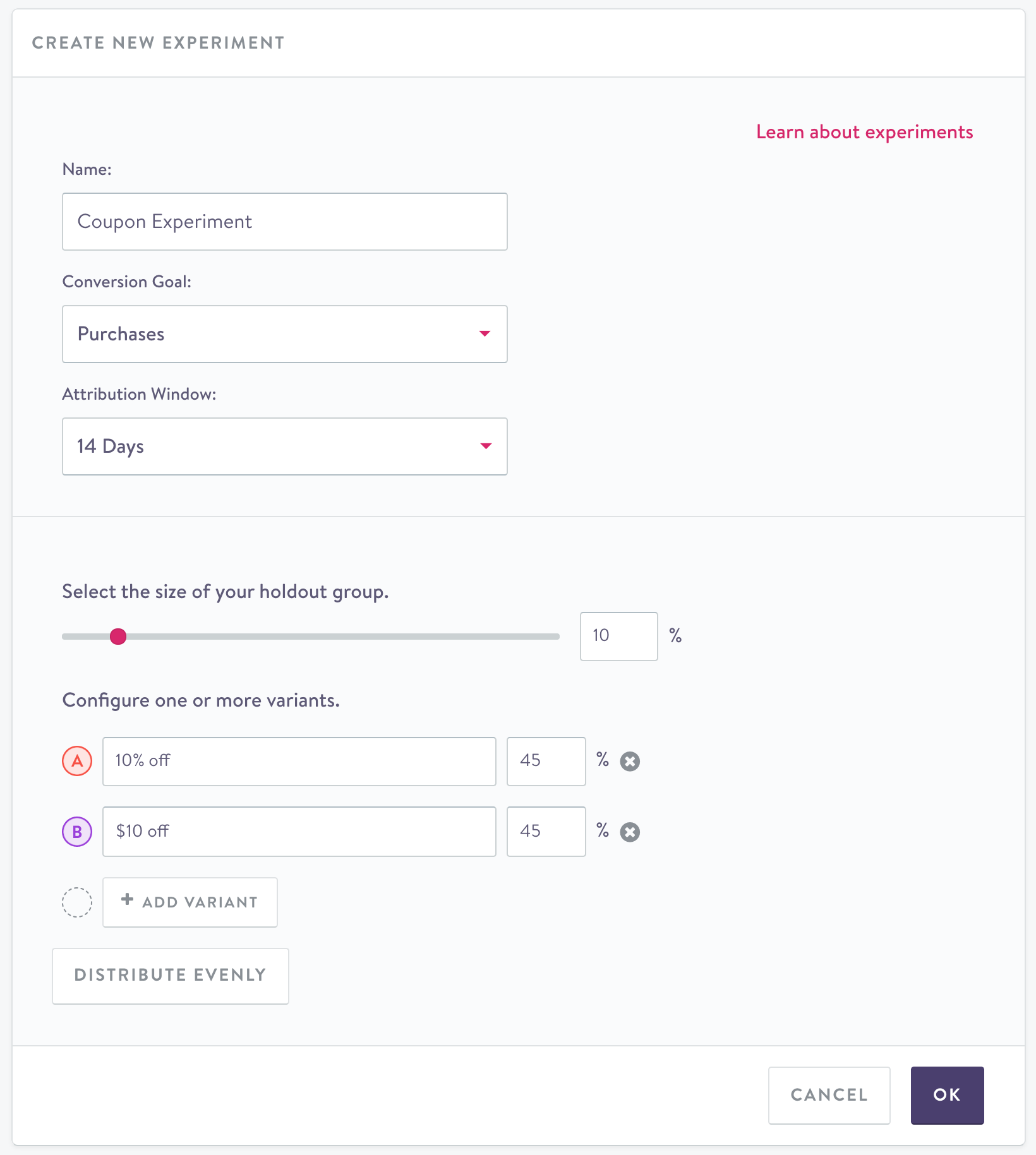

To set up an experiment, you start by inputting a name, followed by a conversion goal and associated attribution window. Setting a conversion goal for a campaign allows you to see how many users performed a specific action after receiving the campaign. The attribution window designates the time frame in which the event can happen after the campaign was sent.
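As a rough illustration of how an attribution window works, the sketch below counts an event as a conversion only if it happens after the send and before the window closes. The function name `is_attributed` and the inclusive boundaries are assumptions made for this example, not a description of how Simon evaluates conversions internally.

```python
from datetime import datetime, timedelta


def is_attributed(sent_at: datetime, event_at: datetime, window: timedelta) -> bool:
    """Return True if the event falls inside the attribution window.

    Assumption: both ends of the window are inclusive.
    """
    return sent_at <= event_at <= sent_at + window


sent = datetime(2024, 1, 1, 9, 0)
window = timedelta(days=7)  # hypothetical 7-day attribution window

print(is_attributed(sent, datetime(2024, 1, 5, 12, 0), window))  # True
print(is_attributed(sent, datetime(2024, 1, 9, 12, 0), window))  # False
```

A shorter window attributes fewer events to the campaign but reduces the chance of counting conversions that would have happened anyway.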

Holdout group

Contacts that are selected for the holdout group will not be targeted by any actions you attach to the flow; this is the case whether or not you select multiple variants.

For example, if the action adds contacts to a particular MailChimp Automation Workflow and the holdout group size is set to 10%, then 10% of the contacts added to this flow will never be added to the selected Automation Workflow.

You can select any value between 0% and 100% for the holdout group size. As a best practice, a balanced control set of 50% can work well for a single-variant flow. A holdout group of 10-20% can work well in an experiment with multiple variants, or in one that targets a large number of contacts (more than 250,000).

Holdouts in journeys

When using holdouts in journeys, it is important to think through how they affect downstream flows. If you maintain the experiment in downstream flows, contacts in the holdout group stay in the holdout group. However, if you do not maintain the experiment, contacts in the holdout group become eligible for the actions that you set up in the journey.

Variants

Variants allow contacts in a segment to enter into differing actions. For example, contacts can receive sends that occur with different creative or coupon amounts. Variants can be thought of as "treatment" groups.

Variants can be given a meaningful name in the free text field and removed by clicking the (x) to the right of the free text field.

By default, the contacts that remain after the holdout group is set aside are distributed evenly across the variants. For example, if you have a 20% holdout group and two variants, each variant will be sent to 40% of contacts. You can also change the allocation of these variants.
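The default split described above is simple arithmetic: subtract the holdout percentage from 100 and divide the remainder by the number of variants. A minimal sketch follows. The function name `default_allocation` and the `"holdout"` dictionary key are hypothetical, chosen only for illustration.

```python
MAX_VARIANTS = 13  # variant limit stated in this article


def default_allocation(holdout_pct: float, variant_names: list[str]) -> dict[str, float]:
    """Split the non-holdout share of contacts evenly across variants."""
    if not 0 <= holdout_pct <= 100:
        raise ValueError("holdout_pct must be between 0 and 100")
    if not 1 <= len(variant_names) <= MAX_VARIANTS:
        raise ValueError(f"expected between 1 and {MAX_VARIANTS} variants")

    share = (100 - holdout_pct) / len(variant_names)
    allocation = {"holdout": holdout_pct}
    allocation.update({name: share for name in variant_names})
    return allocation


print(default_allocation(20, ["A", "B"]))
# {'holdout': 20, 'A': 40.0, 'B': 40.0}
```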

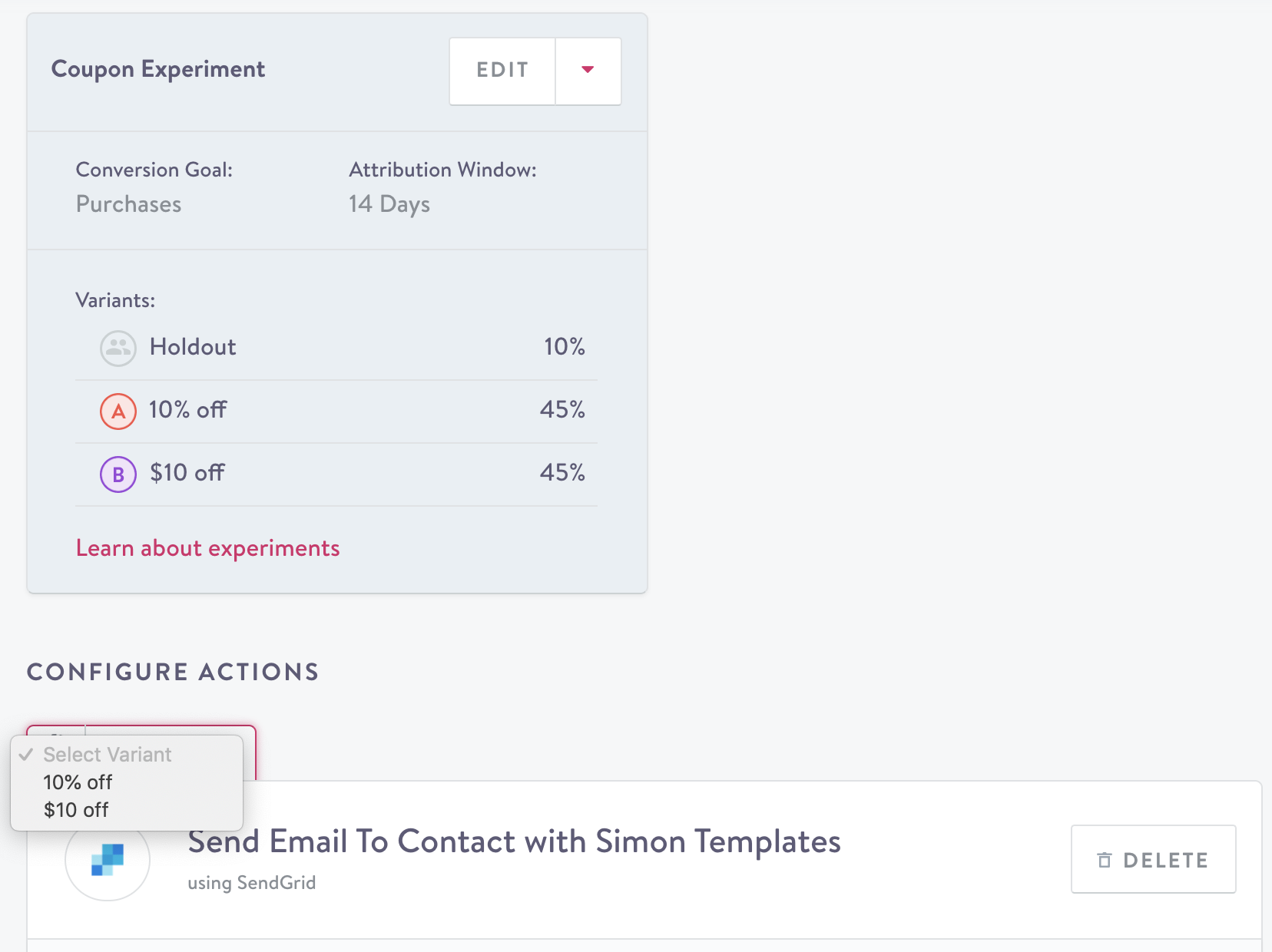

Once you've named all the variants you want to use as part of an experiment, the next step is to assign the variants to actions in the flow.

Creating a new experiment

Variant Maximum

Experiments can have up to 13 variants.

Assigning actions to variants

If you've added variants to your experiment, you'll need to assign each action to a variant. (This step does not apply if you did not add any variants, since each action is then assumed to be part of the "treatment" group behind the scenes.) To do this, click the "Select Variant" dropdown above an action and choose a variant. Note that if you try to save a flow that has variants before assigning your actions to them, you'll get an error. When you launch the flow, each action will be applied only to the contacts in the variant to which it has been assigned.
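The save-time rule above amounts to a simple check: when an experiment has variants, every action must point at one of them. The sketch below mirrors that rule on a hypothetical data shape (a list of dictionaries with `name` and `variant` keys). It is not Simon's actual validation code or API.

```python
def unassigned_actions(actions: list[dict], variants: list[str]) -> list[str]:
    """Return the names of actions that are not assigned to a known variant.

    With no variants defined, every action is treated as part of the
    single treatment group, so nothing is reported.
    """
    if not variants:
        return []
    return [a["name"] for a in actions if a.get("variant") not in variants]


# Hypothetical flow with two variants and one action left unassigned.
actions = [
    {"name": "Email: 10% coupon", "variant": "Coupon 10"},
    {"name": "Email: 20% coupon", "variant": None},
]
print(unassigned_actions(actions, ["Coupon 10", "Coupon 20"]))
# ['Email: 20% coupon']
```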

Once you've added some actions and assigned each of them to a variant (for flows that have variants), you can save the flow.

Attaching a channel action to a variant

Coordinating experiments across multiple flows

Cross-flow experiments allow you to ensure that a given contact will be assigned to the same group (either holdout or variant) across a number of distinct flows. When you are in the flow builder, you will see the option to reference an existing experiment from the drop-down menu or create a new experiment. This can help ensure a consistent customer experience across flows. Keep in mind that drawing conclusions from the experiment will be more difficult if the audiences are not the same or other variables are introduced.
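One common way to keep a contact in the same group across flows is to derive the group deterministically from the experiment name and the contact ID instead of drawing a fresh random number in each flow. The sketch below illustrates that general technique with a hash. It is an assumption for illustration only and does not describe how Simon assigns groups. The function name, the ID format, and the allocation dictionary are all hypothetical.

```python
import hashlib


def assign_group(contact_id: str, experiment: str, allocation: dict[str, float]) -> str:
    """Map a contact to a group using a stable hash of experiment and contact ID.

    `allocation` maps group names to percentages that sum to 100.
    """
    if not allocation:
        raise ValueError("allocation must not be empty")

    digest = hashlib.sha256(f"{experiment}:{contact_id}".encode("utf-8")).hexdigest()
    # Scale the first 32 bits of the hash to a point in [0, 100).
    point = int(digest[:8], 16) / 0x100000000 * 100

    cumulative = 0.0
    last_group = ""
    for group, pct in allocation.items():
        cumulative += pct
        last_group = group
        if point < cumulative:
            return group
    return last_group  # guards against floating-point rounding at the top end


allocation = {"holdout": 20, "A": 40, "B": 40}
in_flow_one = assign_group("contact-123", "spring-promo", allocation)
in_flow_two = assign_group("contact-123", "spring-promo", allocation)
print(in_flow_one == in_flow_two)  # True
```

Because the group depends only on the experiment name and the contact ID, any flow that references the same experiment arrives at the same answer for a given contact.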

Cross-flow experiment results

Once both flows are set up with the experiment, you can visit the experiment results page to see the performance of the experiment on both flows in aggregate, as well as on individual flows.
