Contact Event and Object Datasets

Contact Event vs. Contact Object

When a customer adds an item to their cart, makes a purchase, opens an email from you, or takes another web-based action, that action is called a Contact Event. Contact Events are the web actions taken by your customer base.

The Contact Event dataset is best suited for tracking events across a customer’s lifecycle that you may use for Segmentation, such as email opens, product views, and purchases. Other uses for Contact Event datasets are:

- Use on the Unified Contact View event timeline to understand the actions a contact takes over time.

- Use for Results or Conversion Reporting to track the intended action a contact may take after a campaign is sent to them.

Contact Objects are best suited for attributes or properties that have a many-to-one relationship with a customer that may not have an associated record timestamp. Some common contact objects are accounts or businesses.

Some considerations before you create a dataset

- You can't delete a field after you commit your dataset; only your account manager can. Be sure to pre-plan your dataset before clicking Commit.

- If you're using an identifier other than email, first create an Identity Dataset.

To create and configure a Contact Event dataset, you need SQL and relational database knowledge. Contact your account manager if you need support.

Create a contact event or object dataset

Step one: choose your dataset details

- From the left navigation, expand Datasets, then click Datasets.

- Click Create Dataset.

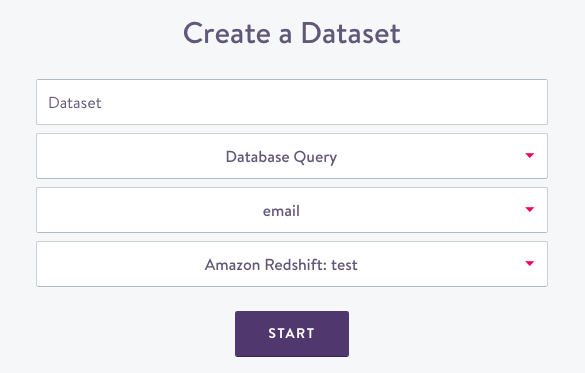

- Choose the Contact Event or Contact Object dataset type, then click Next. The Create a Dataset screen displays:

Dataset source

- Name your dataset; choose something new and unique for future organization.

- Select the data source type.

- Pick an identifier for the dataset. (If you need an identifier other than email, first create an Identity Dataset.)

- Select the specific integration to query. This can be from any of your connected databases.

- Select an event category. Event categories are used to classify event types on our Unified Contact View event timeline. For example, email sends, opens, and clicks are all categorized together as Email Events. You can edit this option later in the Settings tab.

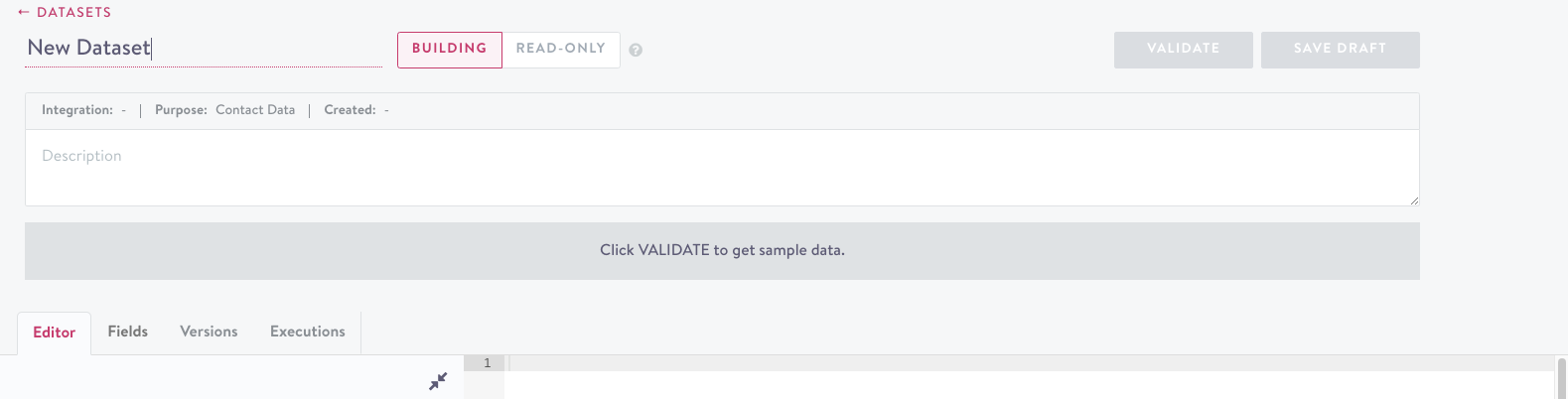

- Click Start. The dataset details screen displays:

dataset details

- A query template displays in the editor tab to get you started. You can use it as is, or delete it and write your own query. Use the Database Schema window on the left to identify or search available field names:

Database Schema

Step two: validate

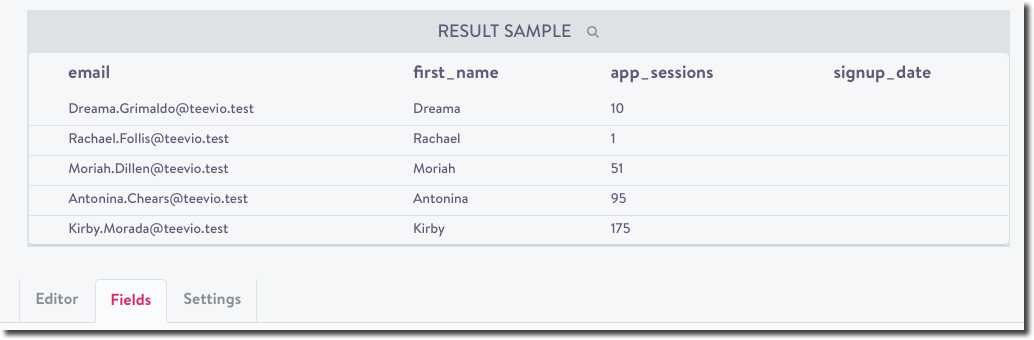

- Click Validate. This checks that the dataset is ingestible by Simon and if so, returns a small sample:

sample data displays

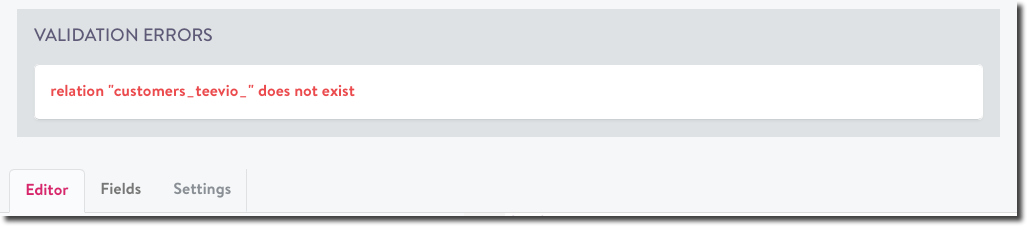

- Correct any validation errors if necessary:

validation errors

See Dataset validation for more details.

Step three: configure fields

You can give each field a Condition Name to be used in the segment builder.

- Click the Fields tab. (You won't be able to click this until your query is validated. See above.)

Data Types

- All fields require a data type for the Simon model. From the first drop-down, choose one of these supported types:

- boolean

- currency

- float

- integer

- positive integer

- big integer

- string (< 255 characters)

- text (255 or more characters)

- date

- rate

- set

Choosing data types

When choosing a data type, consider the operators that you'll need later (e.g. ‘greater than’ for integers, ‘contains’ for strings). In some cases, how the field is saved in your database will differ from how it is saved in Simon. For example, an order ID may be an integer in your database; however, it may make more sense to save it as a string in Simon since you won't be using any arithmetic operators.
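To make the order ID example concrete, here is a minimal Python sketch. The order IDs are made up for illustration, and the code only mimics the kind of operators each type supports; it doesn't reflect how Simon stores or evaluates fields.

```python
# Hypothetical order IDs, stored as integers in the source database.
order_ids_in_database = [1042, 1043, 20042]

# Saved as strings, the same IDs support text operators such as "contains".
order_ids_as_strings = [str(order_id) for order_id in order_ids_in_database]
contains_42 = [order_id for order_id in order_ids_as_strings if "42" in order_id]

# Saved as integers, the natural operators are arithmetic comparisons,
# which rarely carry meaning for an identifier.
greater_than_2000 = [order_id for order_id in order_ids_in_database if order_id > 2000]

print(contains_42)
print(greater_than_2000)
```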

- Enter a condition name. The field name is included by default.

- From the drop-down, select any associated lookup. See Lookup tables.

- Click the last caret on the right to update the description:

edit description

Note on implied null values

In some cases null values are unavoidable. However, it is often the case that a null value implies useful information. For example, a contact without any purchases may return null as their total purchased amount, but it can be implied that their total purchased amount is $0. Simon Data supports implied values that have different defaults based on data types:

| Type | Default Implied Value |
|---|---|
| Numeric | 0 |
| Boolean | False |
| String/Text | Null |

These implied values can be overridden on a per-field basis. To do so, please contact your account manager.
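The defaults in the table can be pictured with a short Python sketch. This is only an illustration of the behavior described here: the `IMPLIED_DEFAULTS` mapping, the `apply_implied_value` function, and the `override` parameter are hypothetical names, not part of Simon.

```python
# Default implied values by type family, taken from the table above.
IMPLIED_DEFAULTS = {
    "numeric": 0,
    "boolean": False,
    "string": None,  # String/Text fields stay null
}


def apply_implied_value(value, type_family, override=None):
    """Return the value, or its implied default when the value is null (None)."""
    if value is not None:
        return value
    # A per-field override (hypothetical parameter) wins over the type default.
    if override is not None:
        return override
    return IMPLIED_DEFAULTS[type_family]


# A contact without purchases returns null for total purchased amount,
# which is then read as 0.
total_purchased = apply_implied_value(None, "numeric")
print(total_purchased)
```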

Step four: settings

The Settings tab configures how the data is used in Simon and how we pull data into Simon.

- Click the Settings tab (previously known as Rules) and complete the following:

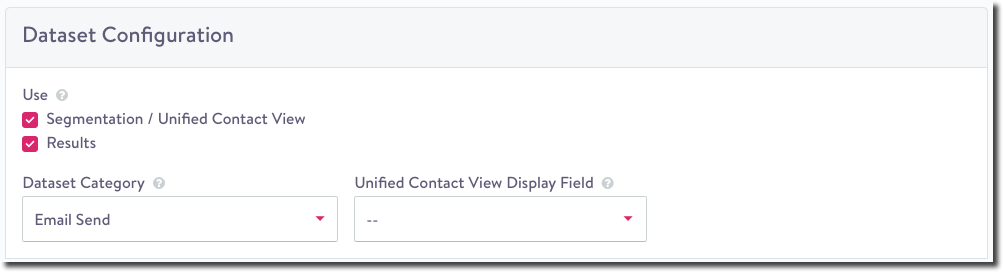

Dataset Configuration

Dataset Configuration describes how data is used (only for Contact Events).

- Under Use, check whether you'd like to use this dataset in Segmentation and/or Results. Both are selected by default:

- If the dataset is marked for Segmentation / Unified Contact View, all fields are used in both segmentation and campaign content.

- If the dataset is marked for Results, see Create Results for more detail on how to use the dataset in measuring results.

- Dataset category determines how the event is categorized in the Unified Contact View and which Lookback period applies to it.

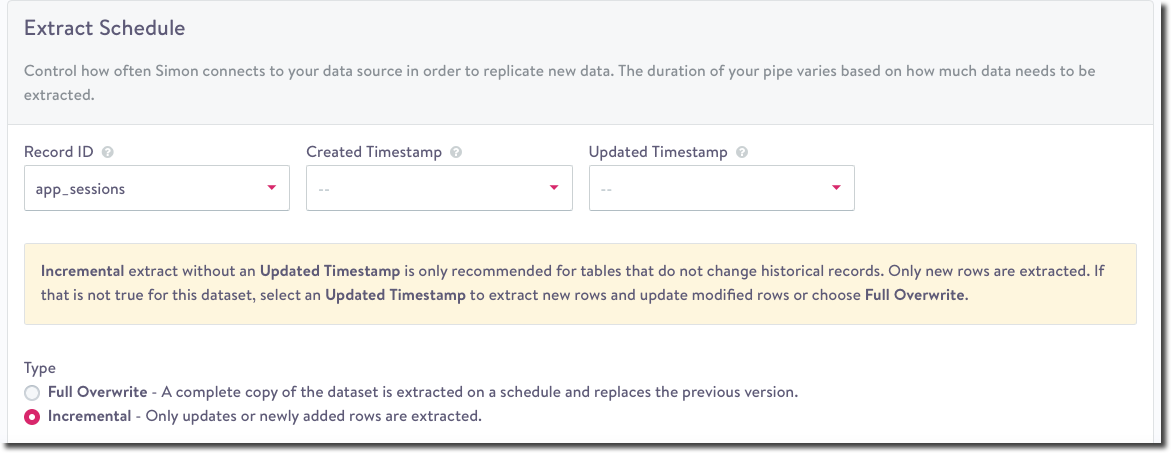

Extract Schedule

The Extract Schedule defines how we pull data into Simon.

extract schedule

- Select the Record ID, the unique event identifier. Keep in mind:

- Any records with a null record ID will be filtered out.

- This field must be unique per record and any duplicates will be removed.

- Record IDs must not contain the '|' character and must be under 255 characters in length.

For example:

Redshift

SELECT md5(user_id || created_at::varchar || purchase_type) as record_id

Not all datasets will have an obvious unique identifier such as event_id. In these cases you will need to derive a unique identifier manually. To do this you must identify a set of properties in the dataset that uniquely identify an event, such as the user, timestamp, and other event properties if necessary. These fields can then be concatenated together into a single string and passed into a hashing function to produce a consistent alphanumeric identifier.
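The same idea as the Redshift example can be sketched in Python, which makes it easy to check a derived ID against the Record ID rules. The function names and sample values below are hypothetical. The hash will only match the Redshift result if the concatenated string is byte-for-byte identical, so treat this as an illustration rather than a drop-in equivalent.

```python
import hashlib


def derive_record_id(user_id, created_at, purchase_type):
    """Hash the identifying fields into a consistent hexadecimal record ID."""
    # Concatenate the fields in a fixed order, mirroring the Redshift example.
    raw = f"{user_id}{created_at}{purchase_type}"
    return hashlib.md5(raw.encode("utf-8")).hexdigest()


def is_valid_record_id(record_id):
    """Check the rules listed above: not null, no '|', under 255 characters."""
    return record_id is not None and "|" not in record_id and len(record_id) < 255


# Hypothetical event properties.
record_id = derive_record_id("user-123", "2024-01-01 12:00:00", "subscription")
print(record_id, is_valid_record_id(record_id))
```

Because an MD5 hex digest is always 32 characters drawn from 0-9 and a-f, a hashed ID stays within the length limit and never contains the '|' character.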

- From the drop-downs, choose a Created Timestamp and Updated Timestamp. Timestamp fields must be formatted as epoch seconds to display the proper date throughout the application.

- Fields selected for these configurations require additional validations. After choosing or updating Created Timestamp or Updated Timestamp, click Validate again. You'll see an error message if the following conditions aren't met:

- Timestamps can't be in the future.

- Timestamps can't contain milliseconds.

- Timestamps can't be null.

- Updated Timestamp must be greater than or equal to Created Timestamp.
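If you want to check your data against these conditions before clicking Validate, a rough Python sketch might look like the following. The function names are hypothetical, and the sketch treats "milliseconds" as any fractional part of an epoch-second value, which is an assumption. It is not Simon's validator.

```python
import time
from datetime import datetime, timezone


def to_epoch_seconds(moment):
    """Convert a timezone-aware datetime to whole epoch seconds."""
    return int(moment.timestamp())


def validate_timestamps(created_ts, updated_ts, now=None):
    """Return a list of problems with a record's timestamps (empty if valid)."""
    now = int(time.time()) if now is None else now
    errors = []
    for name, ts in (("created", created_ts), ("updated", updated_ts)):
        if ts is None:
            errors.append(f"{name} timestamp is null")
        elif ts != int(ts):
            errors.append(f"{name} timestamp contains a fractional (millisecond) part")
        elif ts > now:
            errors.append(f"{name} timestamp is in the future")
    # Only compare the two values when each one is individually valid.
    if not errors and updated_ts < created_ts:
        errors.append("updated timestamp is earlier than created timestamp")
    return errors


created = to_epoch_seconds(datetime(2024, 1, 1, tzinfo=timezone.utc))
print(validate_timestamps(created, created + 60, now=created + 3600))
```

An epoch-millisecond value passed by mistake is roughly a thousand times larger than the current epoch-second value, so it shows up in this sketch as a timestamp in the future.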

- Choose one extraction type. When Simon pipes run, they copy data into the platform. There are two extract types: Full Overwrite and Incremental. Both require a unique Record ID to ensure proper de-duping:

- Full Overwrite: A complete copy of the table is extracted on a schedule and replaces the previous version. This is the only extract strategy that captures any deletions of existing rows. Full Overwrites have higher latency because each pipe run takes longer.

- Incremental: For tables that do not change historical records, Incremental is the recommended choice. As long as the table has a created timestamp it's easy to grab the newest rows every hour. If an updated timestamp is available, Simon can pull in updated or newly added rows. This option doesn't support row deletions. Editing the dataset's SQL will cause a backfill of the entire dataset the next time the dataset updates.
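The difference between the two extract types, especially around deleted rows, can be illustrated with a small Python sketch. It models stored data as a dictionary keyed by Record ID. The function names, the `record_id` and `updated_at` keys, and the sample rows are all hypothetical, and this is not how the Simon pipe is implemented.

```python
def full_overwrite(previous_rows, source_rows):
    """Replace the stored copy with the current source table, keyed by record ID."""
    # previous_rows is ignored on purpose: the new copy replaces it entirely.
    return {row["record_id"]: row for row in source_rows if row["record_id"] is not None}


def incremental(previous_rows, source_rows, last_extracted_at):
    """Add or update only rows whose updated timestamp is newer than the last extract."""
    merged = dict(previous_rows)
    for row in source_rows:
        if row["record_id"] is not None and row["updated_at"] > last_extracted_at:
            merged[row["record_id"]] = row
    return merged


# Stored state after an earlier extract: rows "a" and "b".
previous = {
    "a": {"record_id": "a", "updated_at": 100},
    "b": {"record_id": "b", "updated_at": 100},
}
# Source table now: "a" was updated, "b" was deleted, "c" is new.
source = [
    {"record_id": "a", "updated_at": 150},
    {"record_id": "c", "updated_at": 160},
]

after_full = full_overwrite(previous, source)
after_incremental = incremental(previous, source, last_extracted_at=120)
print(sorted(after_full))
print(sorted(after_incremental))
```

In this sketch the deleted row "b" disappears after a full overwrite but remains after an incremental extract, which matches the note that Incremental doesn't support row deletions.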

Step five: save and commit

If the dataset is valid, click Save to create it. At this point, the dataset won't be ingested by the Simon data pipe, and you may leave the page and come back to continue working on it. The dataset is now in the develop status (see Dataset Lifecycle).

To make the dataset live and begin ingesting data, click Commit. This creates the new fields and associates them with the dataset.

After this step, the dataset must always contain fields with these names. It now has a status of live and will be picked up by the next run of the Simon pipe. You can’t delete a field once it's committed.

You can swap the field in the SQL, but it needs to have the same alias.

Step six: extract validation

The settings tab contains dataset-level and field-level validation checks to ensure the dataset can be successfully ingested by Simon for use in your account. These options will only become available after you Commit the dataset. Validation failures result in a failed extract that generates an Action Panel item. See Dataset Validation for more details.

Other dataset tabs

- Versions: View all versions of this query including author and creation date and time

- Executions: View all run details (date, time, execution length, rows returned)

- Lineage: See Data Lineage

Dataset notifications and alerts

You can receive custom notifications and alerts about what your datasets are doing. See Configure Simon notifications and alerts.

Contact Event Dataset category lookback periods

| Contact Event Dataset Category | Lookback classification | Lookback period |
|---|---|---|
| Other | Behavioral | 30 days |
| Add to Cart | Behavioral | 30 days |
| Abandoned Cart | Behavioral | 30 days |
| Page view | Behavioral | 30 days |
| On-site Activities | Behavioral | 30 days |
| App Activities | Behavioral | 30 days |
| Survey Completion | Behavioral | 30 days |
| Registration | Behavioral | 30 days |
| Email Send | Channel Events | 90 days |
| Email Open | Channel Events | 90 days |
| Email Click | Channel Events | 90 days |
| Email Unsubscribe | Channel Events | 90 days |
| Email Bounce | Channel Events | 90 days |
| SMS Send | Channel Events | 90 days |
| SMS Click | Channel Events | 90 days |
| SMS Unsubscribe | Channel Events | 90 days |
| SMS Bounce | Channel Events | 90 days |
| Push Send | Channel Events | 90 days |
| Push Delivery | Channel Events | 90 days |
| Push Click | Channel Events | 90 days |
| Push Bounce | Channel Events | 90 days |
| Flow Adds | Channel Events | 90 days |
| App Download | Transactional | 365 days |
| App Uninstall | Transactional | 365 days |
| Subscription Activation | Transactional | 365 days |
| Subscription Skip | Transactional | 365 days |
| Subscription Cancellation | Transactional | 365 days |
| Subscription Reactivation | Transactional | 365 days |
| Completed Transaction | Transactional | 365 days |
| Purchase | Transactional | 365 days |
| Return | Transactional | 365 days |
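As a rough illustration of how the table can be read, the Python sketch below maps a few categories to their lookback classification and checks whether an event falls inside the corresponding window. It assumes that a lookback period means events older than that many days fall outside the window. The dictionary and function names are hypothetical, and only three categories from the table are included.

```python
from datetime import datetime, timedelta, timezone

# Lookback period in days for each classification, from the table above.
LOOKBACK_DAYS = {"Behavioral": 30, "Channel Events": 90, "Transactional": 365}

# A few categories from the table, mapped to their classification.
CATEGORY_CLASSIFICATION = {
    "Add to Cart": "Behavioral",
    "Email Open": "Channel Events",
    "Purchase": "Transactional",
}


def within_lookback(category, event_time, now):
    """Return True if the event is no older than its category's lookback period."""
    days = LOOKBACK_DAYS[CATEGORY_CLASSIFICATION[category]]
    return now - event_time <= timedelta(days=days)


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
event_time = now - timedelta(days=45)
print(within_lookback("Add to Cart", event_time, now))
print(within_lookback("Email Open", event_time, now))
```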
